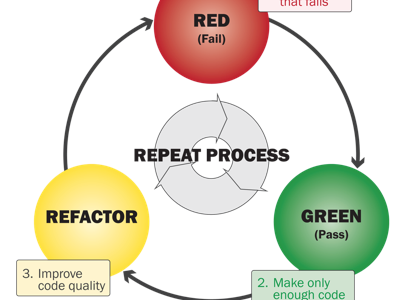

People continue to misunderstand Test-Driven Development (TDD). Kent Beck, who wrote the book on it, recently published an article called Canon TDD, which aims to remove some of those misunderstandings. This post shows an example.

Suppose you need Roman numerals in your application. Maybe you want to show years that way, or the hours of a clock, or the titles of Superbowl games. The problem we’ll look at is to calculate a string that represents the Roman representation of a number. We’ll solve that problem using Canon TDD.

The first step is to write a list of test scenarios we want to cover. We use Wikipedia as a reference to compile this list:

- Input validation: Roman numerals can’t express numbers smaller than 1 or bigger than 3999.

- Numbers are written using a subset of the Latin alphabet (I, V, X, L, C, D, and M), which each have a fixed integer value (1, 5, 10, 50, 100, 500, 1000).

- Roman numerals are constructed by appending partial solutions, e.g. 1005 = MV.

- We should always start with the highest valued symbol, e.g. 5 = V rather than IIIII.

- Subtractive notation shortens some numerals, e.g. 40 = 50 – 10 = XL rather than XXXX.

Step two is to pick one test from this list and express it in code. The order of tests matters. Some tests may force you to make a large change in your code. Always pick the next test such that you make it pass in an obvious way. It’s usually good to start with input validation, so that your programs are secure from the beginning. An additional benefit is that input validation is usually simple.

Here’s the first test:

class WhenConvertingNumbersToRomanNumerals {

@Test

void shouldRejectInexpressibleNumbers() {

assertThrows(RomanNumeral.InexpressibleException.class, () ->

RomanNumeral.from(0));

}

}

This is where we design our interface: we want a RomanNumeral class with a static method from() that accepts a number, returns a String, and throws an InexpressibleException exception on invalid input. To make the test compile, we have to add this code:

public class RomanNumeral {

public static String from(int value) {

return null;

}

public static class InexpressibleException

extends IllegalArgumentException {

}

}

This fails because there is no exception thrown.

The third step is to make the test pass. The easiest way is to unconditionally throw the expected exception:

public static String from(int value) {

throw new InexpressibleException();

}

The fourth step is to optionally refactor. There isn’t enough code yet for that to make sense.

Now we continue with the next cycle at step two. For the next test, we could add another check for invalid input, but that wouldn’t change the code, so that’s not a smart choice. Tests for items 3-5 on the list all depend on the symbols in 2, so that’s the obvious next choice:

@Test

void shouldConvertBaseSymbols() {

assertThat(RomanNumeral.from(1), is("I"));

}

This fails, as expected, with an exception. We need to throw the exception in some cases, but not others. In other words, we need an if statement:

public static String from(int value) {

if (value == 0) {

throw new InexpressibleException();

}

return "I";

}

Note how the code deals exclusively with the two tests that we wrote, and nothing else. Not only is the test coverage 100%, any other test than these two would fail. Such tests will force us to generalize the code.

Let’s first do the easy part and complete the input validation. First, generalize from 0 to non-positive numbers:

@ParameterizedTest

@ValueSource(ints = {-1, 0})

void shouldRejectInexpressibleNumbers(int value) {

assertThrows(RomanNumeral.InexpressibleException.class, () ->

RomanNumeral.from(value));

}

public static String from(int value) {

if (value <= 0) {

throw new InexpressibleException();

}

return "I";

}

With the lower bound in place, let’s add the upper bound:

@ParameterizedTest

@ValueSource(ints = {-1, 0, 4000, 4001})

void shouldRejectInexpressibleNumbers(int value) {

assertThrows(RomanNumeral.InexpressibleException.class, () ->

RomanNumeral.from(value));

}

public static String from(int value) {

if (value <= 0 || value >= 4000) {

throw new InexpressibleException();

}

return "I";

}

This works, but doesn’t look great, so let’s do some refactoring to make it more expressive:

private static final int MIN_EXPRESSIBLE = 1;

private static final int MAX_EXPRESSIBLE = 3999;

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

return "I";

}

private static boolean isExpressible(int value) {

return MIN_EXPRESSIBLE <= value && value <= MAX_EXPRESSIBLE;

}

Now we can cross item 1 of our list of tests. For our next test, we can pick from either 2 (more symbols) or 3 (additive form). The former would introduce an if statement that then generalizes into a switch with a bunch of random facts, while the latter would force us to develop a bit of an algorithm. That algorithm we can then apply to the other symbols. This sounds like an easier hill to climb than the reverse, where we would have to develop an algorithm that can deal with all the symbols. OK, here goes:

@ParameterizedTest

@CsvSource({"1,I", "2,II"})

void shouldPerformAddition(int value, String expected) {

assertThat(RomanNumeral.from(value), is(expected));

}

Note that we renamed to test to better reflect what it is that we’re testing. The simplest way to make this test pass is to generalize the constant into a variable and to add to that variable inside an if:

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

var result = "I";

if (value > 1) {

result += "I";

}

return result;

}

This looks messy, but bear with me. Things will become clearer after we add the next test:

@ParameterizedTest

@CsvSource({"1,I", "2,II", "3,III"})

void shouldPerformAddition(int value, String expected) {

assertThat(RomanNumeral.from(value), is(expected));

}

To make this pass, we have to generalize the if to a while:

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

var result = "I";

while (value > 1) {

result += "I";

value--;

}

return result;

}

If we clean up a bit, we’re starting to see an algorithm form:

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

var result = "";

while (value >= 1) {

result += "I";

value -= 1;

}

return result;

}

The constants in this piece of code are related: "I" is the symbol for 1, so the algorithm adds "I" for as long as it needs. Let’s make this relationship clearer:

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

var result = "";

var numeral = new Numeral("I", 1);

while (value >= numeral.value()) {

result += numeral.text();

value -= numeral.value();

}

return result;

}

private record Numeral(String text, int value) {

}

We can improve this code further. The from() method deals with a nice abstraction of inexpressible numbers, but also with a whole bunch of details about adding texts and subtracting numbers. So let’s extract all those details into its own method:

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

return convert(value);

}

private static String convert(int value) {

var result = "";

var numeral = new Numeral("I", 1);

while (value >= numeral.value()) {

result += numeral.text();

value -= numeral.value();

}

return result;

}

Another code smell here is that we change the value of the parameter. We can solve that in two ways. The first is to assign the parameter to a local variable and then use that variable everywhere we now use the parameter. The second is to introduce a local variable and compare it to the parameter. This turns out to be more instructive:

private static String convert(int value) {

var result = "";

var progress = 0;

var numeral = new Numeral("I", 1);

while (value - progress >= numeral.value()) {

result += numeral.text();

progress += numeral.value();

}

return result;

}

Now we can see something interesting: the combination of result and progress is very much like a Numeral. But in order to express that, we need to be able to add two Numerals:

private static String convert(int value) {

var result = new Numeral("", 0);

var numeral = new Numeral("I", 1);

while (value - result.value() >= numeral.value()) {

result = result.add(numeral);

}

return result.text();

}

private record Numeral(String text, int value) {

Numeral add(Numeral addens) {

return new Numeral(text + addens.text,

value + addens.value);

}

}

Now the contours of the algorithm are starting to become more apparent: we will build up the result by processing our numeral. Presumably, we’ll add processing of other numerals later. In order to prepare for that, let’s tidy the code a bit more by extracting the part that handles the numeral variable. If we did that on the current code, however, the extracted method would need to take result and value as parameters, in addition to numeral. That’s because this is a static method, and thus not able to use fields. Let’s fix that. First we make convert() an instance method:

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

return new RomanNumeral().convert(value);

}

private String convert(int value) {

var result = new Numeral("", 0);

var numeral = new Numeral("I", 1);

while (value - result.value() >= numeral.value()) {

result = result.add(numeral);

}

return result.text();

}

Then we can turn result and value into fields. We also rename value to target and convert() to complete() to better express their meaning:

private final int target;

private Numeral current = new Numeral("", 0);

private RomanNumeral(int target) {

this.target = target;

}

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

return new RomanNumeral(value).complete();

}

private String complete() {

var numeral = new Numeral("I", 1);

while (target - current.value() >= numeral.value()) {

current = current.add(numeral);

}

return current.text();

}

Now we can finally extract the handling of one numeral into its own method:

private String complete() {

var numeral = new Numeral("I", 1);

add(numeral);

return current.text();

}

private void add(Numeral numeral) {

while (target - current.value() >= numeral.value()) {

current = current.add(numeral);

}

}

We may even want to extract another method to express the algorithm better:

private void add(Numeral numeral) {

while (remainder() >= numeral.value()) {

current = current.add(numeral);

}

}

private int remainder() {

return target - current.value();

}

That was a lot of refactoring, but look at what it did to the design.

We have now finished item 3 on our list. Let’s continue with item 2:

@ParameterizedTest

@CsvSource({"1,I", "5,V"})

void shouldConvertBaseSymbols(int value, String expected) {

assertThat(RomanNumeral.from(value), is(expected));

}

We can make this pass by generalizing our single numeral into a list:

private String complete() {

var numerals = List.of(new Numeral("V", 5), new Numeral("I", 1));

numerals.forEach(this::add);

return current.text();

}

Note that our algorithm only works if we process the numerals from high to low (item 4 on our test list). It’s now easy to add the other base symbols:

@ParameterizedTest

@CsvSource({"1,I", "5,V", "10,X", "50,L", "100,C", "500,D", "1000,M"})

void shouldConvertBaseSymbols(int value, String expected) {

assertThat(RomanNumeral.from(value), is(expected));

}

private String complete() {

var numerals = List.of(

new Numeral("M", 1000),

new Numeral("D", 500),

new Numeral("C", 100),

new Numeral("L", 50),

new Numeral("X", 10),

new Numeral("V", 5),

new Numeral("I", 1));

numerals.forEach(this::add);

return current.text();

}

This concludes items 2 and 4 of our test list. The only thing left to do is item 5:

@ParameterizedTest

@CsvSource({"4,IV"})

void shouldShortenWithSubtractiveNotation(int value, String expected) {

assertThat(RomanNumeral.from(value), is(expected));

}

We can make this pass simply by adding that numeral explicitly:

private String complete() {

var numerals = List.of(

new Numeral("M", 1000),

new Numeral("D", 500),

new Numeral("C", 100),

new Numeral("L", 50),

new Numeral("X", 10),

new Numeral("V", 5),

new Numeral("IV", 4),

new Numeral("I", 1));

numerals.forEach(this::add);

return current.text();

}

While that works, it isn’t pretty. There is duplication in the values, since "IV" == "V" - "I" == 5 - 1 == 4. Let’s express that better:

private String complete() {

var v = new Numeral("V", 5);

var i = new Numeral("I", 1);

var numerals = List.of(

new Numeral("M", 1000),

new Numeral("D", 500),

new Numeral("C", 100),

new Numeral("L", 50),

new Numeral("X", 10),

v,

v.subtract(i),

i);

numerals.forEach(this::add);

return current.text();

}

private record Numeral(String text, int value) {

public Numeral subtract(Numeral subtrahens) {

return new Numeral(subtrahens.text + text,

value - subtrahens.value);

}

}

The others are similar:

@ParameterizedTest

@CsvSource({"4,IV", "9,IX", "40,XL", "90,XC", "400,CD", "900,CM"})

void shouldShortenWithSubtractiveNotation(int value, String expected) {

assertThat(RomanNumeral.from(value), is(expected));

}

private String complete() {

var i = new Numeral("I", 1);

var v = new Numeral("V", 5);

var x = new Numeral("X", 10);

var l = new Numeral("L", 50);

var c = new Numeral("C", 100);

var d = new Numeral("D", 500);

var m = new Numeral("M", 1000);

var numerals = List.of(

m,

m.subtract(c),

d,

d.subtract(c),

c,

c.subtract(x),

l,

l.subtract(x),

x,

x.subtract(i),

v,

v.subtract(i),

i);

numerals.forEach(this::add);

return current.text();

}

Now we see another form of duplication in the way the list of numerals is constructed. There is a pattern that repeats itself for every power of 10. We should extract that pattern:

private String complete() {

var i = new Numeral("I", 1);

var v = new Numeral("V", 5);

var x = new Numeral("X", 10);

var l = new Numeral("L", 50);

var c = new Numeral("C", 100);

var d = new Numeral("D", 500);

var m = new Numeral("M", 1000);

var numerals = new TreeSet<>(comparing(Numeral::value).reversed());

numerals.addAll(includeSubtractives(i, v, x));

numerals.addAll(includeSubtractives(x, l, c));

numerals.addAll(includeSubtractives(c, m, d));

numerals.forEach(this::add);

return current.text();

}

private Collection<Numeral> includeSubtractives(Numeral one,

Numeral five, Numeral ten) {

return List.of(

one,

five.subtract(one),

five,

ten.subtract(one),

ten);

}

Note that we have to use a set to remove duplicate numerals and we need to sort that set in the correct order for the algorithm to work.

We’re still not done, though. The way we add the pattern of subtractives isn’t random; there’s a pattern to that too:

private String complete() {

var i = new Numeral("I", 1);

var v = new Numeral("V", 5);

var x = new Numeral("X", 10);

var l = new Numeral("L", 50);

var c = new Numeral("C", 100);

var d = new Numeral("D", 500);

var m = new Numeral("M", 1000);

var baseNumerals = List.of(i, v, x, l, c, d, m);

var numerals = new TreeSet<>(comparing(Numeral::value).reversed());

for (var index = 0; index < baseNumerals.size() - 1; index += 2) {

numerals.addAll(includeSubtractives(

baseNumerals.get(index),

baseNumerals.get(index + 1),

baseNumerals.get(index + 2)));

}

numerals.forEach(this::add);

return current.text();

}

We can clean that up further by extracting the base numerals into a constant:

private static final List<Numeral> BASE_NUMERALS = List.of(

new Numeral("I", 1),

new Numeral("V", 5),

new Numeral("X", 10),

new Numeral("L", 50),

new Numeral("C", 100),

new Numeral("D", 500),

new Numeral("M", 1000));

private String complete() {

var numerals = new TreeSet<>(comparing(Numeral::value).reversed());

for (var index = 0; index < BASE_NUMERALS.size() - 1; index += 2) {

numerals.addAll(includeSubtractives(

BASE_NUMERALS.get(index),

BASE_NUMERALS.get(index + 1),

BASE_NUMERALS.get(index + 2)));

}

numerals.forEach(this::add);

return current.text();

}

And extracting the creation of numerals into its own method:

private String complete() {

numerals().forEach(this::add);

return current.text();

}

private Collection<Numeral> numerals() {

var result = new TreeSet<>(comparing(Numeral::value).reversed());

for (var index = 0; index < BASE_NUMERALS.size() - 1; index += 2) {

result.addAll(includeSubtractives(

BASE_NUMERALS.get(index),

BASE_NUMERALS.get(index + 1),

BASE_NUMERALS.get(index + 2)));

}

return result;

}

The code now expresses all concepts well and our test list is empty, so we’re done.

Here’s the complete solution:

import java.util.*;

import static java.util.Comparator.comparing;

public class RomanNumeral {

private static final int MIN_EXPRESSIBLE = 1;

private static final int MAX_EXPRESSIBLE = 3999;

private static final List<Numeral> BASE_NUMERALS = List.of(

new Numeral("I", 1),

new Numeral("V", 5),

new Numeral("X", 10),

new Numeral("L", 50),

new Numeral("C", 100),

new Numeral("D", 500),

new Numeral("M", 1000));

private final int target;

private Numeral current = new Numeral("", 0);

private RomanNumeral(int target) {

this.target = target;

}

public static String from(int value) {

if (!isExpressible(value)) {

throw new InexpressibleException();

}

return new RomanNumeral(value).complete();

}

private static boolean isExpressible(int value) {

return MIN_EXPRESSIBLE <= value && value <= MAX_EXPRESSIBLE;

}

private String complete() {

numerals().forEach(this::add);

return current.text();

}

private Collection<Numeral> numerals() {

var result = new TreeSet<>(comparing(Numeral::value).reversed());

for (var index = 0; index < BASE_NUMERALS.size() - 1; index += 2) {

result.addAll(includeSubtractives(

BASE_NUMERALS.get(index),

BASE_NUMERALS.get(index + 1),

BASE_NUMERALS.get(index + 2)));

}

return result;

}

private Collection<Numeral> includeSubtractives(Numeral one,

Numeral five, Numeral ten) {

return List.of(

one,

five.subtract(one),

five,

ten.subtract(one),

ten);

}

private void add(Numeral numeral) {

while (remainder() >= numeral.value()) {

current = current.add(numeral);

}

}

private int remainder() {

return target - current.value();

}

public static class InexpressibleException

extends IllegalArgumentException {

}

private record Numeral(String text, int value) {

Numeral add(Numeral addens) {

return new Numeral(text + addens.text,

value + addens.value);

}

public Numeral subtract(Numeral subtrahens) {

return new Numeral(subtrahens.text + text,

value - subtrahens.value);

}

}

}

You must be logged in to post a comment.